Evaluation Planning – 2.04 Lifecycle Analysis

Programs change over time. In fact, like organisms, programs can be viewed as progressing through lifecycle stages: they are initiated (born); they typically go through phases of rapid change and growth; they may stabilize and become more “settled”; they may be disseminated widely; and at any point along the way they may be retired or replaced. Integrating principles from both systems theories and evolutionary theories, the SEP was explicitly designed to identify where a program is in its lifecycle and to encourage a progression through evaluation phases appropriate to its lifecycle phase.

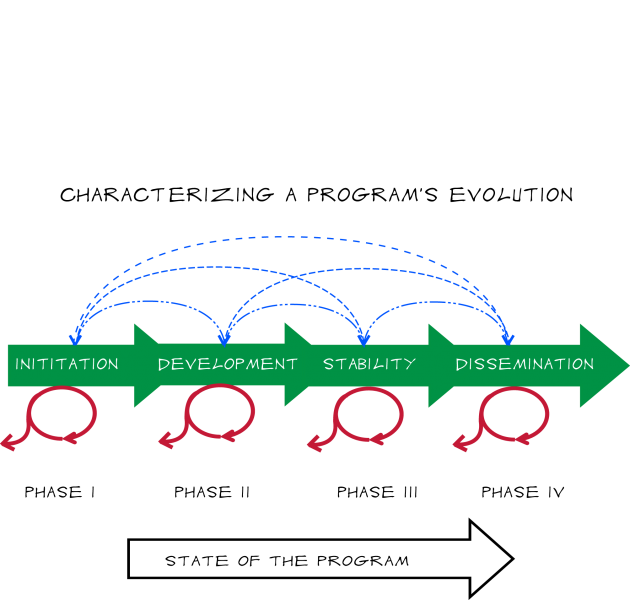

Figure 5 offers one way of characterizing a program’s evolution. The “State of the Program” arrow emphasizes that the passage of time alone does not mark a program’s evolution. Program staff, organization leadership, key funders, and others make decisions throughout a program’s lifecycle. Over time, these decisions contribute to a substantive progression that includes the refinement and stabilization of program content and approach, reducing the variability of the program from one round to the next as it settles into its essential components. In other words, as the state of the program moves from left to right, the internal stability of the program increases. This progression also reflects decisions made along the way about the program’s expansion, continuation, or contraction, and it generally marks a move from smaller-scale pilot trials toward more widespread use.

Figure 5 – Phases in Program Lifecycle

For practical purposes we define four broad lifecycle “phases”: initiation, development, stability, and dissemination. In actuality, program evolution is a continuous and dynamic process; a program is never “done evolving.” Moreover, an individual program’s evolution is not always linear. The iterative, “regroup-and-try-again” paths symbolized by the blue dashed lines in Figure 5 are realistic (and important). A program can revert “backward” to an earlier phase at any point in its lifecycle, even when it is mature. Programs may also stay in one phase, or move incrementally within it, for some time (symbolized by the red circles), and they may be retired at any point (the exiting red arrows).

Each iteration of a program is related to the program’s prior history, but it is also shaped by decisions based on new information about how, and how well, the program works and about what the target audiences or community need, as well as by purely external factors such as funding availability. The process of evolution involves learning, changing, and ultimately strengthening the larger system as a program is run, evaluated, revised, and re-run over time.

The process of program evolution through lifecycle phases is driven, in part, by evaluation. Information gathered through evaluation can be used to make positive changes to a program’s implementation and scope, pushing the program forward (and sometimes backward) through the lifecycle phases. Accompanying and supporting the program’s evolution is a similarly evolving pattern of evaluation activities. A key tenet of the SEP is that evaluation has a lifecycle as well, and that for any given program lifecycle phase, or state of the program, there is an appropriate evaluation lifecycle phase. Both sets of lifecycle phases are defined in more detail below.

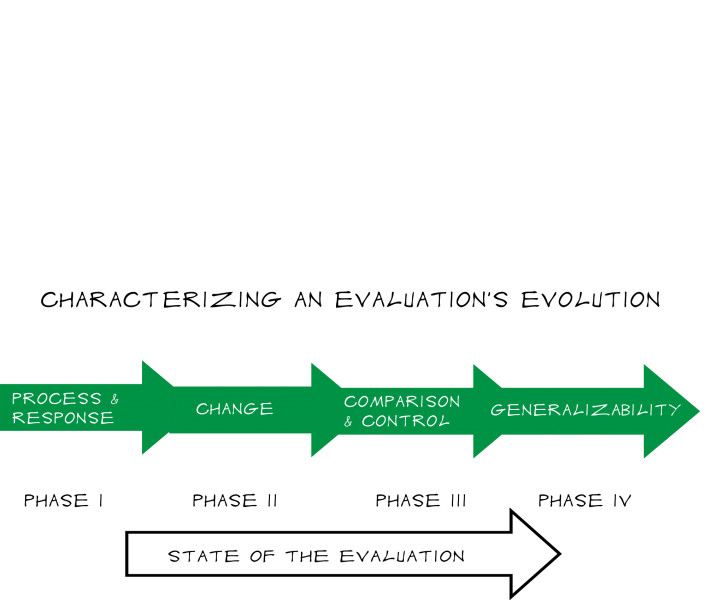

Figure 6 depicts the evaluation lifecycle phases, analogous to the program lifecycle phases in Figure 5 above. The “State of the Evaluation” is a synthesis of the multiple dimensions of a program evaluation. Movement from left to right in this figure corresponds to potential increases in the scope and/or intensity of the evaluation effort. We distinguish four broad phases, delineated according to their basic purpose or goals. Early-phase evaluations focus on how well the program is being implemented and how participants are responding to it; evaluations in the second phase assess change associated with program participation; evaluations in the third phase use more elaborate comparison and control group designs to examine causality; and evaluations in the fourth phase examine how generalizable the program’s results are likely to be to other contexts and settings.

Figure 6 – Phases in Evaluation Lifecycle

Alignment

Alignment between program and evaluation lifecycle phases is essential for ensuring that programs obtain the kind of information that is most needed at any given program lifecycle phase, and that program and evaluation resources are used efficiently. New programs should generally rely on process and implementation evaluations and basic satisfaction surveys rather than more controlled pre-post assessments. These programs are still changing a great deal and need basic, rapid feedback that can be incorporated into the next round of implementation.

Using sophisticated outcome evaluation strategies on a program that is still in an early lifecycle phase is more than just a waste of resources; it also raises the risk of poor decisions. An outcome evaluation of an early-phase program might happen to yield favorable results, but because the program is still changing considerably, this seemingly favorable outcome might not hold up in subsequent rounds and could lead to over-investment in something that has not yet stabilized. The opposite risk is also significant: early outcome evaluations might show poor results and lead to the premature cancellation of a program that actually has great promise but needs some basic weaknesses resolved.

On the other hand, managers of a mature, consistently presented, and well-received program typically need to decide whether to recommit to it or even expand the resources devoted to it. At that phase it is critical to evaluate program outcomes and obtain evidence of change associated with, or possibly caused by, the program. Such a program might not actually be attaining its intended outcomes and should be retired or substantially revised to meet a community need; alternatively, it may be an extremely valuable program that is not being disseminated as widely as it should be because it cannot build a strong enough case to funders. Without appropriate evaluation, program resources will not be allocated as well as they could be. Evaluating participant or facilitator satisfaction alone simply would not serve the program well.

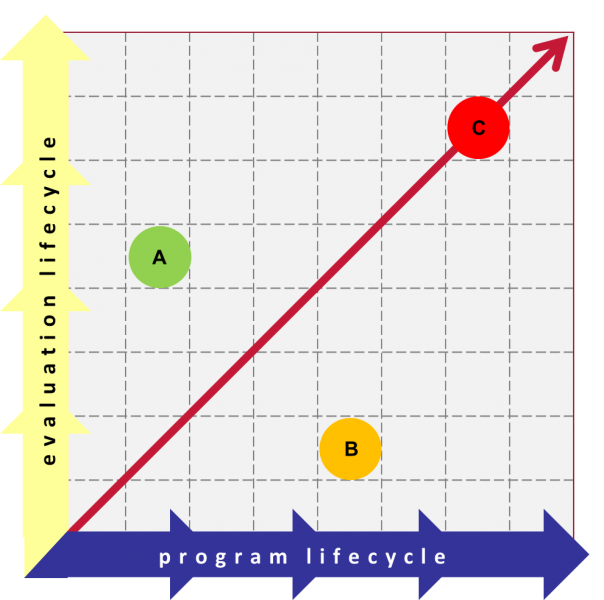

Figure 7 offers a simple representation of what alignment and misalignment of program and evaluation lifecycles look like, using the sequential lifecycle phases established above. Program C in this illustration lies on the 45-degree line, indicating ideal alignment between its program and evaluation lifecycle phases.

Figure 7 – Lifecycle Alignment

In practice, it is very common for a program’s lifecycle and its evaluation lifecycle to be out of alignment. Early-phase programs such as Program A in Figure 7 may face stakeholder pressure to demonstrate effectiveness through sophisticated outcome evaluations even though the program is still changing considerably from one round to the next. As described above, this pressured over-investment in evaluation may use more resources than are warranted at this stage and raises the risk of poor program decisions. Program B, by contrast, may have been in place and stable for some time but still relies on simple end-of-session satisfaction surveys. This under-investment in evaluation also raises the risk of poor decisions. Such misalignment may simply result from being stuck in a rut of familiar routines, or the program may be constrained by an underfunded evaluation budget. These scenarios are common and realistic, but misalignment can be costly. Moving toward alignment should therefore be treated as a key goal of the evaluation plan.
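The SEP describes alignment qualitatively; the Python sketch below is a hypothetical illustration, not part of the protocol. It assumes the eight sub-phases (IA through IVB, as defined later in this section) can be treated as ordered positions, so that a program’s phase and its evaluation’s phase can be compared directly. The function names and the example phase assignments for Programs A, B, and C are invented for illustration.

```python
# Hypothetical illustration only: the SEP does not prescribe code. Sub-phases
# are treated as ordered positions purely so that two phases can be compared.
SUBPHASES = ["IA", "IB", "IIA", "IIB", "IIIA", "IIIB", "IVA", "IVB"]


def phase_rank(label: str) -> int:
    """Return the 0-based ordinal position of a sub-phase label such as 'IIB'.

    Raises ValueError for a label that is not one of the eight sub-phases.
    """
    return SUBPHASES.index(label)


def classify_alignment(program_phase: str, evaluation_phase: str) -> str:
    """Compare a program's lifecycle phase with its evaluation's lifecycle phase.

    'aligned'          -> both phases match (on the diagonal, like Program C)
    'over-investment'  -> evaluation is ahead of the program (like Program A)
    'under-investment' -> evaluation is behind the program (like Program B)
    """
    gap = phase_rank(evaluation_phase) - phase_rank(program_phase)
    if gap == 0:
        return "aligned"
    return "over-investment" if gap > 0 else "under-investment"


# Example phases are assumed; Figure 7 does not specify exact sub-phases.
print(classify_alignment("IA", "IIIB"))   # over-investment  (Program A-like)
print(classify_alignment("IIIB", "IA"))   # under-investment (Program B-like)
print(classify_alignment("IIB", "IIB"))   # aligned          (Program C-like)
```

A real alignment review is a facilitated discussion among stakeholders rather than a calculation; the sketch only captures the direction of a gap, that is, whether evaluation is running ahead of or behind the program.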

Program Lifecycle Definitions

Each program will follow its own unique path through a lifecycle. Any given program might move forward and backward through and between the phases as needed, although the general tendency is to progress through the phases over successive implementations. We define four broad phases, each of which is subdivided into two parts:

1) Program Phase I: Initiation

A Phase I program is typically a new program that is just starting up or an existing program that has been overhauled and revised considerably and is being piloted in its new form. Programs in this initiation phase will almost inevitably go through revisions.

a) Phase IA programs are in their initial implementation(s), either as newly conceived programs or as existing programs adapted from another context or from basic research.

b) Phase IB programs have been through initial trials but are still relatively new and are still going through substantial changes or revisions to major parts of the program.

2) Program Phase II: Development

Programs are considered to be in the development phase when they have been implemented successfully one or more times and are still undergoing some refinement but, compared to those in Phase I, the scope and pace of change are much smaller.

a) Phase IIA programs are going through significant changes but some program elements have settled into consistent patterns.

b) Phase IIB programs are still going through change in some components, but most program elements are being implemented consistently.

3) Program Phase III: Stability

Programs are considered to be in the stability phase when they are being implemented consistently. Program planners and providers know what can be expected in implementing the program; there are relatively few surprises. Participant experiences are relatively consistent from one session to the next. The program has finalized its procedures and protocols.

a) Phase IIIA programs are being implemented consistently and have lesson plans or curricula to guide facilitators.

b) Phase IIIB programs have formal written procedures or protocols that make it possible for new facilitators working in that context to deliver the program consistently.

4) Program Phase IV: Dissemination

The dissemination phase is a period when the program is adapted for wider implementation while still adhering to the essentials of the program model. Logistical issues regarding support of the program across a broader range of circumstances are addressed. In short, dissemination-phase programs are run at multiple new and diverse sites, with new staff and participants.

a) Phase IVA programs are being implemented in multiple sites in different contexts; adaptations to new contexts have been made in order to maintain the essential meaning of the program.

b) Phase IVB programs are in wide distribution, well beyond the initial context in which they were developed and used.

Most programs do not progress all the way to the dissemination phase. In many cases, experience and evaluation will show that a program is not sustainable for various reasons. Evaluation may reveal that a program is not achieving the desired outcomes or that it has negative consequences. Perhaps the funding stream has dried up, or participation was too low to maintain the program. Many programs will be retired and then revamped to try another approach, beginning another cycle of growth and starting the process over again.

Evaluation Lifecycle Definitions

Evaluation lifecycle phases are distinguished according to the kinds of claims one would be interested in making in that phase, the corresponding evaluation methodology or design, and the quality of measures.

1) Evaluation Phase I: Process and Response

Evaluation in this phase emphasizes implementation and process assessment in order to provide rapid feedback that will be used to refine the program model, “debug” the program procedures, identify barriers to high-quality adoption, and assess participant response to the program.

a) Phase IA evaluations examine program implementation or process, and participant and facilitator satisfaction. These typically use documentation strategies and post-only evaluation of reactions and satisfaction. Evaluations may rely more heavily on qualitative measures (for example, more open-ended questions), but quantitative measures are also used.

b) Phase IB evaluations are also typically process, implementation, or satisfaction assessments, but they extend the evaluation scope to examine the extent to which selected outcomes are present or absent. Evaluations are post-only; quantitative or qualitative outcome measures are under development or are being adapted from other uses, and their reliability is being established.

2) Evaluation Phase II: Change

This phase of evaluation emphasizes the assessment of changes in outcomes (e.g., knowledge, skills, attitude, behavior, performance) that occur in association with the program. The major distinction between the two sub-phases is where the change is being measured – within groups or within individuals.

a) Phase IIA evaluations typically involve unmatched pretests and posttests of outcomes, along with assessment of the consistency (reliability) and validity of measurement. Change is assessed within groups, and quantitative or qualitative methods may be used. Results tend to be used for management and accountability of the program.

b) Phase IIB evaluations typically consist of a pretest and posttest of outcomes matched at the level of the individual, using quantitative or qualitative methods. The matching allows for more precise analysis of patterns of change that may be occurring, and enables exploration of the reliability and validity of measures. Because participant identification is necessary to match pre- and post-program outcomes, and because results are increasingly used for public accountability, the required level of participant protection increases. Human subjects review and protection (informed consent, anonymity, or confidentiality) is typically undertaken here and becomes increasingly formalized.

3) Evaluation Phase III: Comparison and Control

The emphasis in this phase is on evaluating effectiveness, that is, whether the program is responsible for causing the observed changes in outcomes. Here, evaluation involves the use of comparison groups or variables, along with statistical controls to adjust for factors the design does not otherwise control. Phase III evaluation designs typically call for more sophisticated statistical analysis, so programs using Phase III evaluations may need the assistance of a data analyst or statistician.

a) Phase IIIA evaluations use design and statistical controls and comparisons (control groups, control variables or statistical controls).

b) Phase IIIB evaluations use controlled experimental or quasi-experimental designs (e.g., randomized experiments, regression-discontinuity designs) to assess the effectiveness of the program.

4) Evaluation Phase IV: Generalizability

These in-depth and extensive program evaluations focus on how dependably programs produce consistent outcomes over an increasingly broad range of circumstances. Evaluations at this phase may include meta-analysis or synthesis across multiple sites and implementations, investigation of regional or national effects, and/or assessment of program “generalizability.” Phase IV evaluation designs also call for sophisticated statistical analysis, so programs using Phase IV evaluations may need the assistance of a data analyst or statistician.

a) Phase IVA evaluations are multi-site integrated assessments yielding large data sets over multiple waves of program implementation.

b) Phase IVB evaluations present a formal assessment across multiple program implementations that enables general assertions about a program in a wide variety of contexts (e.g., meta-analysis).

Lifecycle Application

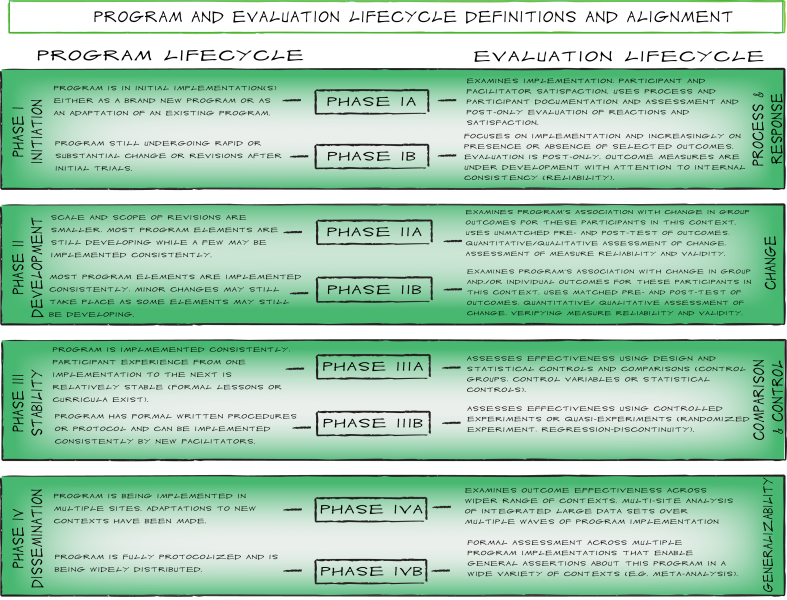

Figure 8 shows how the phases of these two lifecycles are ideally synchronized: an evaluation should be appropriate for the lifecycle phase of the program. However, it is one thing to present these “ideal” phases as synchronized and another thing entirely to make them “fit” what is occurring in a real-world program context. Your job as the Evaluation Champion is to facilitate the discussions, first regarding the program’s current lifecycle phase and the evaluation methods currently being used, and then regarding how best to work toward alignment and continue the developmental progression of the program. Alignment of program and evaluation lifecycles will not necessarily occur in a single cycle of evaluation (particularly if there is initially a large discrepancy between the two). Decisions about how quickly to work toward alignment will have to be weighed against the feasibility of different approaches as well as external pressures (e.g., funder mandates).

Figure 8

See Also:

Activity – Lifecycle Alignment Review

In the Course of a Lifetime – Ontogeny

The Flower and the Bee – Symbiosis and Co-Evolution

The Survival of Programs with “Fitness” – Evolution and Evaluation

Q&A

Q: What if my program and evaluation lifecycles are not aligned?

Misalignment is a very common problem. To address it well, it is important to understand more deeply what the consequences of misalignment are and how to communicate them to stakeholders. Alignment between program and evaluation lifecycle phases helps ensure that programs obtain the kind of information that is most needed at any given program lifecycle phase, and that program and evaluation resources are used efficiently.

New programs are still changing a great deal and need basic, rapid feedback about program process, satisfaction, and similar issues that can be incorporated into the next round of implementation. Focusing instead on longer-term outcome evaluation, for example, would use additional resources and introduce a risk of bad decisions: the outcome evaluation might happen to yield favorable results, but because the program is still changing considerably, this seemingly favorable outcome might not hold up in subsequent rounds and could lead to over-investment in something that has not yet stabilized. The opposite risk is also significant: early outcome evaluations might show poor results and lead to the premature cancellation of a program that actually has great promise but needs some basic weaknesses resolved.

On the other hand, for mature programs that are consistently presented and well received, a continued, exclusive focus on basic feedback about program process or satisfaction would not serve the program well. These programs typically need evidence about effectiveness in order to decide whether to recommit to them or even expand the resources devoted to them. Regardless of participant satisfaction and program stability, such a program may or may not be achieving its intended outcomes. Without appropriate evaluation, program resources will not be allocated as well as they could be.

In cases of misalignment, you should work toward alignment as you develop this and future evaluation plans. How that happens, and how long it takes, will depend on the particular program, the reasons for misalignment, and stakeholder priorities.

Q: Can my program have an early lifecycle phase even if it has been around for a long time?

Yes, it can. Program lifecycle phase is not just a matter of the passage of time. The definitions we use have to do with how much a program is changing from one round to the next. So a program that has been around for 30 years but is currently undergoing major revisions in how it is delivered or what it covers would be considered an early-phase program: it has entered a new phase in which you are figuring out what works, trying new things, and getting the “bugs” out. Similarly, a program that has been around for many years but is “always changing” would be considered a relatively early-phase program. This might be the case with a program whose name remains the same but whose delivery method changes significantly on an ongoing basis; it might be a very adaptive program that constantly changes in response to audience needs or desires. It might be adapting in sensible ways, but by its nature it is not settled enough to be considered stable and standardized. Keep in mind that there is nothing inherently good or bad about being in one lifecycle phase or another!

Q: How does my program’s evaluation lifecycle affect my current evaluation plan?

Knowing your program’s evaluation lifecycle phase amounts to understanding the “state of knowledge” about your program. This state of knowledge is an essential determinant of what kind of new knowledge the evaluation should attempt to build in order to help the program evolve well.

For example, if little or no evaluation has been done on this program in the past (it is in an early evaluation lifecycle phase), it will probably be best to begin building knowledge by conducting process-oriented and exploratory evaluations. If years’ worth of satisfaction surveys have been collected from participants, yet there has been no evaluation of the program’s association with desired outcomes, it would make sense to expand the state of knowledge about the program by planning an evaluation that examines a few key outcomes.

Q: What should I do if my program doesn’t fit well into any of the lifecycle phase definitions?

In practice, the boundary between one phase and the next can be fuzzy and difficult to pin down. The value of this analysis is less about selecting the “right” box, and more about being thoughtful about where your program is in its evolution and where it needs to “go” next. Our general rule of thumb is to choose the lower phase if you really can’t decide where your program belongs. We also recognize that programs have many parts, and sometimes some parts of a program are stable and well-established, while others may be quite new and untried. It is difficult to assign a single lifecycle phase to the whole program in this case, so think in terms of assigning lifecycle phases to the individual parts.
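The rule of thumb above (choose the lower phase when you cannot decide, and assign phases to individual parts of a program) can be sketched in Python as a hypothetical illustration. The component names and candidate phases are invented; only the ordering of sub-phases IA through IVB comes from the definitions above.

```python
from typing import Dict, Sequence

# Sub-phases in lifecycle order, from the program lifecycle definitions.
SUBPHASES = ["IA", "IB", "IIA", "IIB", "IIIA", "IIIB", "IVA", "IVB"]


def resolve_phase(candidates: Sequence[str]) -> str:
    """When a program part seems to fit several sub-phases, pick the lowest one."""
    return min(candidates, key=SUBPHASES.index)


# Hypothetical program whose parts are at different points in their evolution.
components: Dict[str, Sequence[str]] = {
    "curriculum": ["IIIA"],               # settled, with lesson plans
    "online delivery": ["IA", "IB"],      # undecided between two phases
    "recruitment": ["IIA", "IIB"],        # undecided between two phases
}

component_phases = {name: resolve_phase(c) for name, c in components.items()}
print(component_phases)
# {'curriculum': 'IIIA', 'online delivery': 'IA', 'recruitment': 'IIA'}
```

Keeping a phase per part, rather than collapsing everything into one label, preserves the information the answer above recommends thinking about: which parts are stable and which are still new and untried.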

Q: I only just got involved with my program so I don’t know its history. How can I figure out what phase it’s in?

It is useful to know a program’s history in order to “see” where it is in its evolution, but you don’t have to know the history to determine its current lifecycle phase. The program lifecycle phase definitions are based on how much a program is (currently) changing and being adjusted from one round to the next. The changes may involve the scope of what the program includes, the kinds of audiences being reached or targeted, the way it is delivered (formats, settings), and so on. The magnitude of the changes is one factor in the program lifecycle phase: we distinguish between big changes and substantial revisions on the one hand, and smaller fine-tuning changes that tend to occur as the program converges toward a steady form on the other. We also distinguish between changes that cover large parts of the program (earlier phase) and changes focused on smaller segments (later phase).